News and updates

June 16, 2025

March 26th, 2025

- Final results have been released and they are available here.

March 17th, 2025

- Validation 4 results have been released and they are available here.

March 13th, 2025

- Validation 4 and Alternative Submission Options

Dear participants,

We are happy to announce the start of the Validation 4 checkpoint. You can find the data in the Participate/Get Data section of the CodaLab page. Please submit your solutions by 13.03.2025 23:59 UTC+0.

Due to certain issues with the CodaLab website, and in preparation for the final Testing phase, we are providing an alternative way to submit your solution. You can fill out the form below and attach the resulting images as well as your Docker solutions. Docker containers are not required for this checkpoint; however, we encourage you to provide them, as this will streamline the process of running your final solutions. Instructions can be found in the form.

Responses submitted through the form will be run manually. For this reason, participants are limited to two solutions per day. Please use this method only when submitting through CodaLab is impossible. The results will be maintained in a table on the CodaLab page. Please note that the table will not be updated in real time.

Solutions submitted through either CodaLab or Google Forms will be eligible for the Validation 4 checkpoint MOS evaluation. The most recent solution (across CodaLab and Forms) will be used for evaluation.

We remind you that submitting your Docker solutions and reports will be required for participating in the Testing phase.

We wish you the best of luck.

March 11th, 2025

- Validation 3 results have been released and they are available here.

March 10th, 2025

- Delay in MOS Scores for Validation 3

- Due to technical difficulties, the release of MOS scores for Validation 3 has been delayed. We sincerely apologize for this inconvenience and appreciate your patience. The results will be made available as soon as possible.

- Additional Validation 4 Checkpoint

- To provide you with additional feedback on your solutions, we will conduct a Validation 4 checkpoint. The data for Validation 4 will be released on March 11th, 2025, and the deadline for submission will be March 13th, 2025.

- Release of Additional Training Data

- To support further improvements to your models, we have decided to release additional training data. Specifically, the DSLR files from Validation 2 are now available for participants. You can find the download link in the "Get Data" section of CodaLab.

March 3rd, 2025

- The third validation dataset is available. Submitting solutions to calculate the mean opinion score for the resulting images is possible until March 4th at 23:59 GMT+0.

February 20th, 2025

- A new validation dataset is available.

February 10th, 2025

- The validation server is online.

February 5th, 2025

- Training data (input and output) and validation data (inputs only) are now available through the registration form or on CodaLab.

January 24th, 2025

- The registration form for participating in the 2025 challenge is available.

Motivation for this challenge

The process of capturing and processing images taken by cameras relies on onboard processing to transform raw sensor data into polished photographs, typically encoded in a standard color space such as sRGB. Night photography, however, presents unique challenges not encountered in daylight scenes. Night images often feature complex lighting conditions, including multiple visible illuminants, and are characterized by distinctive noise patterns that make conventional photo-finishing techniques for daytime photography unsuitable. Additionally, widely used image quality metrics like SSIM and LPIPS are often ineffective for evaluating the nuances of night photography. In previous editions of this challenge, participants were tasked with processing raw night scene images into visually pleasing renders, assessed subjectively using mean opinion scores. These efforts have driven significant advancements in the field of night image processing.

This year's challenge introduces a new and fundamentally different approach while retaining the use of the mean opinion score as an evaluation metric. Moving away from purely subjective aesthetics, the 2025 challenge will adopt a potentially more objective framework by leveraging paired datasets captured with a Huawei smartphone and a high-end Sony camera using a beam splitter to ensure identical perspectives. Participants will be provided with raw Huawei smartphone images as input and corresponding processed Sony camera images as the expected output. The goal is to develop algorithms that transform the raw Huawei images—characterized by higher noise levels, vignetting, and reduced detail—into outputs that convincingly resemble the high-quality processed Sony images.

The mean opinion score will be used to evaluate submissions, ensuring that human viewers, rather than automated metrics that might be exploited, determine how convincingly the processed Huawei images align with the processed Sony images. This focus on human perception underscores the importance of developing rendering algorithms whose results are not only technically accurate but also perceptually closer to the target images.

By addressing the unique limitations of mobile phone raw images and the inherent complexities of night photography, the 2025 challenge seeks to advance the state of the art in image processing. The combination of objective input-output alignment and perceptual validation through mean opinion scores provides a robust framework for fostering innovation in mobile-focused night photography rendering. In this framework objectivity plays a more pronounced role, but it is not expected to be abused in any way because of the final subjective oversight.

Challenge Goal and Uniqueness

This challenge tackles the complexities of nighttime photography by leveraging paired datasets of raw Huawei smartphone images and processed Sony camera images, providing a clear ground truth for evaluation. The goal is to develop algorithms that process raw Huawei images to convincingly resemble the high-quality Sony outputs, addressing a long-standing challenge in computer vision.

Night photography is vital for applications like surveillance and security and also has artistic significance in creating stunning images. By combining objective ground-truth comparisons with human perception through mean opinion scores, the challenge ensures that results are both technically accurate and visually convincing.

This unique framework bridges mobile device constraints, low-light conditions, and human-centric evaluation, advancing the state of the art in night image processing.

Challenge data

The participants in this challenge will be granted access to raw-RGB images of night scenes, which have been simultaneously captured using a Huawei smartphone sensor and a Sony professional camera sensor by means of a beam splitter. The images are encoded in 16-bit PNG files. Accompanying these images will be additional metadata, provided in JSON files. The challenge will commence with the provision of the initial images to participants, who will use them to develop and test their algorithms. Data access will be granted upon registration, and further information can be found on the registration form at the bottom of the page. Additional images will be made available throughout the challenge, and further details can be found in the information provided on the evaluation and leaderboard. As an added resource, the organizers have also provided code for a baseline algorithm, as well as a demonstration, on GitHub.
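As a small sanity check on the data format described above, the following sketch reads the header of a PNG file, confirms that it is 16-bit, and parses the accompanying JSON text. It is only an illustration under stated assumptions: the function names `read_png_header` and `check_sample` are hypothetical, no particular metadata keys are assumed, and the organizers' baseline code on GitHub remains the reference for actually decoding the images.

```python
import json
import struct

# Every PNG file starts with this fixed 8-byte signature.
PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def read_png_header(data: bytes) -> dict:
    """Parse the IHDR chunk, which must be the first chunk of a PNG file."""
    if data[:8] != PNG_SIGNATURE:
        raise ValueError("not a PNG file")
    # Chunk layout: 4-byte big-endian length, then 4-byte chunk type.
    length, chunk_type = struct.unpack(">I4s", data[8:16])
    if chunk_type != b"IHDR" or length != 13:
        raise ValueError("missing or malformed IHDR chunk")
    # IHDR begins with width, height, bit depth and color type.
    width, height, bit_depth, color_type = struct.unpack(">IIBB", data[16:26])
    return {
        "width": width,
        "height": height,
        "bit_depth": bit_depth,
        "color_type": color_type,
    }


def check_sample(png_bytes: bytes, metadata_text: str) -> dict:
    """Verify that an image is a 16-bit PNG and load its JSON metadata."""
    header = read_png_header(png_bytes)
    if header["bit_depth"] != 16:
        raise ValueError(f"expected a 16-bit PNG, got {header['bit_depth']}-bit")
    # The metadata schema is dataset-specific; no keys are assumed here.
    metadata = json.loads(metadata_text)
    return {"header": header, "metadata": metadata}
```

In practice, `png_bytes` would come from opening an image file in binary mode and `metadata_text` from reading the JSON file that accompanies it.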

Evaluation/Leaderboard

More information on evaluation and leaderboard formation will be given soon.

Submissions

The evaluation of the participants’ solutions in this challenge will consist of four checkpoints: three validation checkpoints during the contest and one final checkpoint at the conclusion of the contest. Only the final checkpoint is mandatory; the validation checkpoints are optional. As such, new participants may join the challenge at any time before the final submission deadline, provided they fulfill the other requirements of the challenge.

The dataset for the first validation checkpoint consisting of 50 images will be released at the start of the challenge and will only be evaluated using SSIM and PSNR, i.e., the mean opinion score will not be used here. The main goal here is for the participants to be able to conduct a simple evaluation of their solutions.
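For participants who want a quick local check before submitting, the sketch below computes PSNR between a reference image and a rendered image, both given as flat sequences of pixel values. It is not the organizers' scoring code: the function name `psnr`, the flat-sequence input, and the 8-bit peak value of 255 are assumptions made for this example, and the exact settings used on the evaluation server may differ.

```python
import math


def psnr(reference, rendered, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two equally sized pixel sequences."""
    if len(reference) != len(rendered) or len(reference) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    # Mean squared error over all pixel values.
    mse = sum((r - p) ** 2 for r, p in zip(reference, rendered)) / len(reference)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)
```

Higher values indicate a closer match to the reference. For real images, the pixel values of all channels would be flattened into one sequence first, or the same formula would be applied with an array library.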

The datasets for the second and third validation checkpoints will be released according to the timeline, and each will consist of 125 images. Both objective metrics such as SSIM and PSNR and the mean opinion score will be used for evaluation.

The dataset for the final test checkpoint will consist of 150 images and the scoring will be the same as for the second and third validation checkpoints. The final checkpoint is mandatory for all participants who wish to be included in the final report and be eligible for prizes.

Submission rules

When submitting a solution, participants will have the option to make their Docker container open, i.e., publicly available after the challenge. Doing so is a prerequisite for receiving a winner certificate, should they finish in one of the first three places, and for being included in the final report.

It is important to note that part of the evaluation of this challenge is subjective in nature, and the challenge organizers have designed it to be as fair as possible, given the nature of the task. The challenge organizers reserve the right to modify the evaluation procedures as necessary to improve the fairness of the challenge.

Only solutions that use open components available to all participants at the start of the competition are allowed. Proprietary solutions will be disqualified.

A team is not allowed to create multiple accounts. If such a situation is detected, all accounts of the team may be disqualified.

Teams may use additional training data if they have access to it. Reporting the use of additional data in the solution description is not mandatory, but it is encouraged in the interest of fairness.

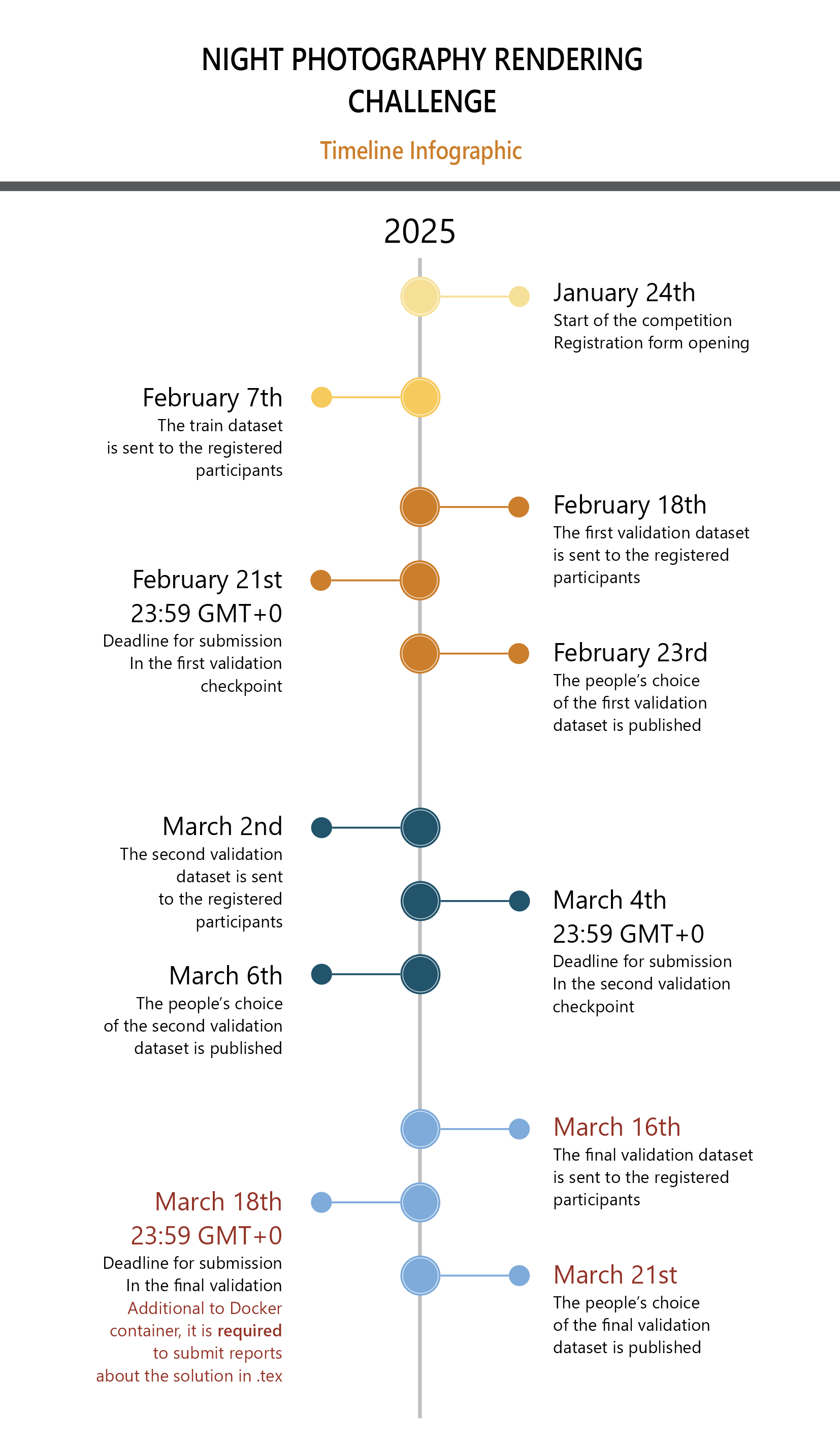

Timeline

* Please note that the timeline may change slightly, so it is advisable to check the challenge web page regularly.

Registered Teams

The list of registered teams will be available here.

Reporting

To be eligible for the prizes, participants will be required to send code and reports about their solutions in the form of short papers during the submission. If this report is not submitted, participants who would otherwise win a prize will be passed over.

Prizes

There will be two prize categories:

- Objective metrics (best score on CodaLab)

- Subjective metrics (best score based on MOS)

Winners will receive a winner certificate and will have the opportunity to submit their paper to NTIRE'2025 and to participate in the common report, which will also be submitted to the CVPR workshop.

Q&A

If you still have any questions, please send an email to nightphotochallenge@gmail.com

Organizer

Co-organizers

- Egor Ershov

- Sergey Korchagin

- Artyom Panshin

- Arseniy Terekhin

- Ekaterina Zaychenkova

- Georgiy Lobarev

- Aleksei Khalin

- Vsevolod Plokhotnyuk

- Denis Abramov

- Elisey Zhdanov

- Sofia Dorogova

- Nikola Banić

Partner

- Radu Timofte and Georgii Perevozchikov