News and updates

March 21st, 2023

- The final leaderboard based on the Toloka platform comparisons is now available on the final leaderboard page.

- The final leaderboard based on the photographer's opinion, together with a detailed explanation, is now available on the final leaderboard page.

- The leaderboard based on the Toloka platform comparisons is now available on the second leaderboard page.

- The leaderboard based on the photographer's opinion, together with a detailed explanation, is now available on the second leaderboard page.

- Information on how to access the validation dataset for the second leaderboard has been e-mailed to the participants and added to the registration form responses.

- Additional information is available on the first leaderboard page.

- The leaderboard based on the photographer's opinion, together with a detailed explanation, is now available on the first leaderboard page.

- The leaderboard based on the Toloka platform comparisons is now available on the first leaderboard page.

- Information on how to access the validation dataset has been e-mailed to the participants and added to the registration form responses.

- Additional information about the registered teams has been added.

Motivation for this challenge

Rendering a photograph involves onboard camera processing that converts the raw sensor image into the final photo-finished image, which is then encoded in a standard color space such as sRGB. However, images captured at night present challenges that rarely arise in daytime images. For example, while assuming a single global illumination is often sufficient for daytime images, night images frequently contain multiple illuminants, many of them visible in the scene, which makes it difficult to determine the best illumination correction for night image rendering. Additionally, tone curves and other photo-finishing strategies commonly used to process daytime images may not be appropriate for night photography. Furthermore, commonly used image metrics such as SSIM and LPIPS may not be suitable for evaluating night images. The lack of established best practices and the limited research on night photography are significant obstacles. The primary motivation for this challenge is to encourage research and advance the field of image processing for night photography.

Challenge Goal and Uniqueness

Our challenge poses an unusual problem for computer vision: there are no ground-truth images. The task is to develop a procedure for creating realistic and visually pleasing photographs of night scenes, where capturing the details, colors, and atmosphere of the scene is difficult and has not yet been fully solved. Night photography is technically important in fields such as surveillance and security, and it also has a unique artistic beauty that can produce breathtaking, awe-inspiring photographs. Submissions will be evaluated by mean opinion scores from observers and further judged on their visual appearance by a professional photographer.

SotA

Baseline

Challenge data

Participants will be granted access to raw-RGB images of night scenes captured with a Canon EOS 7D sensor and encoded as 16-bit PNG files. Each image is accompanied by metadata provided in a JSON file. The challenge begins with 50 initial images, which participants can use to develop and test their algorithms. Data access is granted upon registration; further information can be found on the registration form at the bottom of the page. Additional images will be released during the challenge; see the Evaluation/Leaderboard section for details. As an added resource, the organizers have also published code for a baseline algorithm, as well as a demonstration, on GitHub. In addition, we provide a dataset of RAW images of a color checker captured under various lighting conditions. For each frame, the spectrum of the light emitted by the illuminant was also measured with a spectrophotometer at 109 steps ranging from 370 to 730 nm. This dataset is available here.
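The spectral measurements above imply a fixed wavelength grid: if the 109 steps include both endpoints, adjacent samples are 360 / 108, or about 3.33 nm, apart. The minimal sketch below builds that grid and shows one common way to scale raw counts to [0, 1] before further processing. It assumes evenly spaced samples and scalar black and white levels stored under the hypothetical JSON keys `black_level` and `white_level`; the actual metadata files may use different names or per-channel values.

```python
import json

# Spectral sampling from the data description: 109 samples, 370 nm to 730 nm.
# Assumption: samples are evenly spaced and include both endpoints.
NUM_SAMPLES = 109
START_NM, END_NM = 370.0, 730.0


def wavelength_grid(start=START_NM, end=END_NM, n=NUM_SAMPLES):
    """Return n evenly spaced wavelengths (nm) from start to end inclusive."""
    step = (end - start) / (n - 1)
    return [start + i * step for i in range(n)]


def load_levels(json_text):
    """Read black and white levels from a metadata JSON string.

    The key names "black_level" and "white_level" are hypothetical; check the
    JSON files shipped with the challenge data for the real field names.
    """
    meta = json.loads(json_text)
    return meta["black_level"], meta["white_level"]


def normalize_raw(values, black_level, white_level):
    """Subtract the black level, divide by the usable range, clip to [0, 1]."""
    scale = float(white_level - black_level)
    return [min(max((v - black_level) / scale, 0.0), 1.0) for v in values]


if __name__ == "__main__":
    grid = wavelength_grid()
    print(len(grid), round(grid[1] - grid[0], 3))
    # Made-up levels and pixel values, for illustration only.
    black, white = load_levels('{"black_level": 2048, "white_level": 15000}')
    print(normalize_raw([2048, 8524, 20000], black, white))
```

With these illustrative numbers the last line prints `[0.0, 0.5, 1.0]`: the black level maps to 0, the midpoint of the usable range to 0.5, and a value above the white level is clipped to 1.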

Evaluation/Leaderboard

The current leaderboard is available HERE.

The participants' solutions will be evaluated at three checkpoints: two validation checkpoints during the contest and one final checkpoint at its conclusion. Only the final checkpoint is mandatory; the validation checkpoints are optional. New participants may therefore join the challenge at any time before the final submission deadline, provided they fulfill the other requirements of the challenge.

Mean opinion scores will be obtained through visual comparison carried out on Toloka, a crowdsourcing platform similar to Mechanical Turk. Toloka users will rank the solutions in a forced-choice manner. Note that Toloka relies primarily on observers from Eastern Europe and Central Asia to rank the images, so the observers' preferred image aesthetics may carry a cultural bias. Toloka users will not be told the identity of the participants. An example of this evaluation process can be found at a specified link.
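The page does not say how the forced-choice votes are turned into scores. As an illustration only, one standard approach is the Bradley-Terry model, which assigns each solution a strength such that the probability that A is preferred over B is s_A / (s_A + s_B). The sketch below fits it with the classic minorization-maximization update from a list of hypothetical (winner, loser) pairs; it is not the organizers' scoring code. A solution that never wins converges to a score of 0.

```python
from collections import defaultdict


def bradley_terry(comparisons, iterations=100):
    """Estimate a Bradley-Terry strength per method from (winner, loser) pairs.

    Uses the minorization-maximization update
        s_i <- wins_i / sum_j n_ij / (s_i + s_j)
    and normalizes the scores to sum to 1 after every iteration.
    Assumes winner != loser in every pair.
    """
    wins = defaultdict(int)
    pair_counts = defaultdict(int)  # comparisons per unordered pair {i, j}
    methods = set()
    for winner, loser in comparisons:
        wins[winner] += 1
        pair_counts[frozenset((winner, loser))] += 1
        methods.update((winner, loser))

    scores = {m: 1.0 for m in methods}
    for _ in range(iterations):
        new_scores = {}
        for m in methods:
            denom = 0.0
            for pair, n in pair_counts.items():
                if m in pair:
                    (other,) = pair - {m}
                    denom += n / (scores[m] + scores[other])
            new_scores[m] = wins[m] / denom if denom else scores[m]
        total = sum(new_scores.values())
        scores = {m: s / total for m, s in new_scores.items()}
    return scores


if __name__ == "__main__":
    # Hypothetical votes between three anonymous solutions A, B and C.
    votes = [("A", "B")] * 3 + [("B", "A")] + [("B", "C")] * 3 + [("C", "B")] + [("A", "C")] * 4
    scores = bradley_terry(votes)
    print(sorted(scores, key=scores.get, reverse=True))
```

For these votes the ranking printed is A, B, C. A plain win rate would be simpler, but Bradley-Terry accounts for which opponents each solution was compared against.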

The results of the validation checkpoints will give the teams feedback on the quality of their solutions. At each validation checkpoint, 50 new test images will be provided to the participants. Each team may submit up to two distinct solution image sets of exactly 50 images each, which is intended to help participants test the behavior of different solutions.
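Before uploading a validation submission, a team might check locally that it respects the limits above: at most two solution sets, each containing exactly 50 JPEG images. The sketch below is a hypothetical pre-check that assumes each solution set sits in its own folder; it is not part of the official submission process, which goes through a Google form.

```python
from pathlib import Path

EXPECTED_COUNT = 50       # each validation set must contain exactly 50 images
MAX_SETS_PER_TEAM = 2     # up to two distinct solution sets per checkpoint
JPEG_SUFFIXES = {".jpg", ".jpeg"}


def check_solution_set(folder):
    """Return a list of problems found in one solution folder (empty if OK)."""
    files = [p for p in Path(folder).iterdir() if p.is_file()]
    jpegs = [p for p in files if p.suffix.lower() in JPEG_SUFFIXES]
    problems = []
    if len(jpegs) != EXPECTED_COUNT:
        problems.append(f"expected {EXPECTED_COUNT} JPEG files, found {len(jpegs)}")
    others = sorted(p.name for p in files if p not in jpegs)
    if others:
        problems.append(f"non-JPEG files present: {others}")
    return problems


def check_submission(set_folders):
    """Check the number of solution sets and the contents of each one."""
    problems = []
    if not 1 <= len(set_folders) <= MAX_SETS_PER_TEAM:
        problems.append(
            f"submit 1 to {MAX_SETS_PER_TEAM} solution sets, got {len(set_folders)}"
        )
    for folder in set_folders:
        problems.extend(f"{folder}: {msg}" for msg in check_solution_set(folder))
    return problems
```

An empty list from `check_submission(["solution_a", "solution_b"])` means both (hypothetical) folders passed the checks.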

For the final evaluation, the submission must include 50 processed images in JPEG format, as well as a Docker container with a runnable solution that reproduces the submitted results. The solution will be evaluated on the public test dataset, which will be sent to the participants, and on the private part of the test dataset; for the private evaluation, the images will be processed by the participant's solution inside the Docker container. During the final checkpoint, the solutions ranked in the top 10 by Toloka scores will proceed to the professional judgment stage. In this stage, a professional photographer will select the final winners and will also be asked to provide feedback on his decision. The final winners will be determined by the combined Toloka and professional-photographer scores.
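Because the private test images are processed by the participant's own container, the solution needs a non-interactive entry point that renders every input image. The rules do not specify the container interface, so the sketch below assumes a hypothetical layout: each raw PNG sits next to a JSON metadata file with the same stem, and each result is written as a JPEG with that stem into an output folder. The `render` callback stands in for a team's actual pipeline.

```python
from pathlib import Path


def find_inputs(input_dir):
    """Pair each raw PNG with the JSON metadata file that shares its stem.

    Assumption: metadata files are named like their images (001.png, 001.json).
    PNGs without a matching JSON file are skipped.
    """
    pairs = []
    for png in sorted(Path(input_dir).glob("*.png")):
        meta = png.with_suffix(".json")
        if meta.exists():
            pairs.append((png, meta))
    return pairs


def output_path(png, output_dir):
    """Name the rendered JPEG after the input image (001.png -> 001.jpg)."""
    return Path(output_dir) / (png.stem + ".jpg")


def run(input_dir, output_dir, render):
    """Call render(png_path, json_path, jpg_path) for every input pair.

    Returns the list of output paths, in input order.
    """
    Path(output_dir).mkdir(parents=True, exist_ok=True)
    written = []
    for png, meta in find_inputs(input_dir):
        out = output_path(png, output_dir)
        render(png, meta, out)
        written.append(out)
    return written
```

Inside the container, the image's entry point could call `run("/data/input", "/data/output", my_render)`, where both paths and `my_render` are placeholders for whatever the team chooses.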

When submitting their solution, participants may choose to make their Docker container open, i.e., publicly available after the challenge. This is a prerequisite for eligibility for the monetary prizes described later. Participants who keep their Docker container closed will still receive winner certificates if they place in the top three, but they will not receive the monetary prize.

It is important to note that the evaluation of this challenge is subjective in nature, and the challenge organizers have designed it to be as fair as possible, given the nature of the task. The challenge organizers reserve the right to modify the evaluation procedures as necessary to improve the fairness of the challenge.

Only solutions built from open components that were available to all participants at the start of the competition are allowed. Proprietary solutions will be disqualified.

Submissions

As previously stated, for each evaluation checkpoint, participants will be permitted to submit 50 images in the JPEG format with high-quality compression. Each team may submit a maximum of two solutions. Submissions will be accepted through a Google form, which will be provided to registered teams.

We expect images of 3464 x 5202 pixels (height x width) in landscape orientation and 5202 x 3464 pixels in portrait orientation. Images of other sizes will be rescaled automatically using a simple linear method. An example of the expected JPEG file format can be found in the data folder of the challenge repository.
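Since a landscape image is wider than it is tall, the sizes above must be read as height x width. The small sketch below picks the expected size from an image's orientation so a team can resize its own output rather than rely on the automatic rescaling. Treating square images as landscape is an assumption, as the rules do not cover that case.

```python
# Expected output sizes from the submission rules, as (height, width) in pixels.
LANDSCAPE_HW = (3464, 5202)
PORTRAIT_HW = (5202, 3464)


def expected_size(height, width):
    """Return the (height, width) a rendered image is expected to have.

    Assumption: an image at least as wide as it is tall counts as landscape.
    """
    return LANDSCAPE_HW if width >= height else PORTRAIT_HW


def needs_rescaling(height, width):
    """True if the image would be rescaled automatically by the organizers."""
    return (height, width) != expected_size(height, width)
```

If the resizing is done with Pillow, keep in mind that `Image.resize` expects the target size as (width, height), the reverse of the order used here.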

For the final evaluation, the submission must also include a Docker container with a runnable solution that reproduces the submitted results; this container will be used to process the private part of the test dataset.

Note that participants must have a Google account, in accordance with Google's policy, for both preliminary and final submissions.

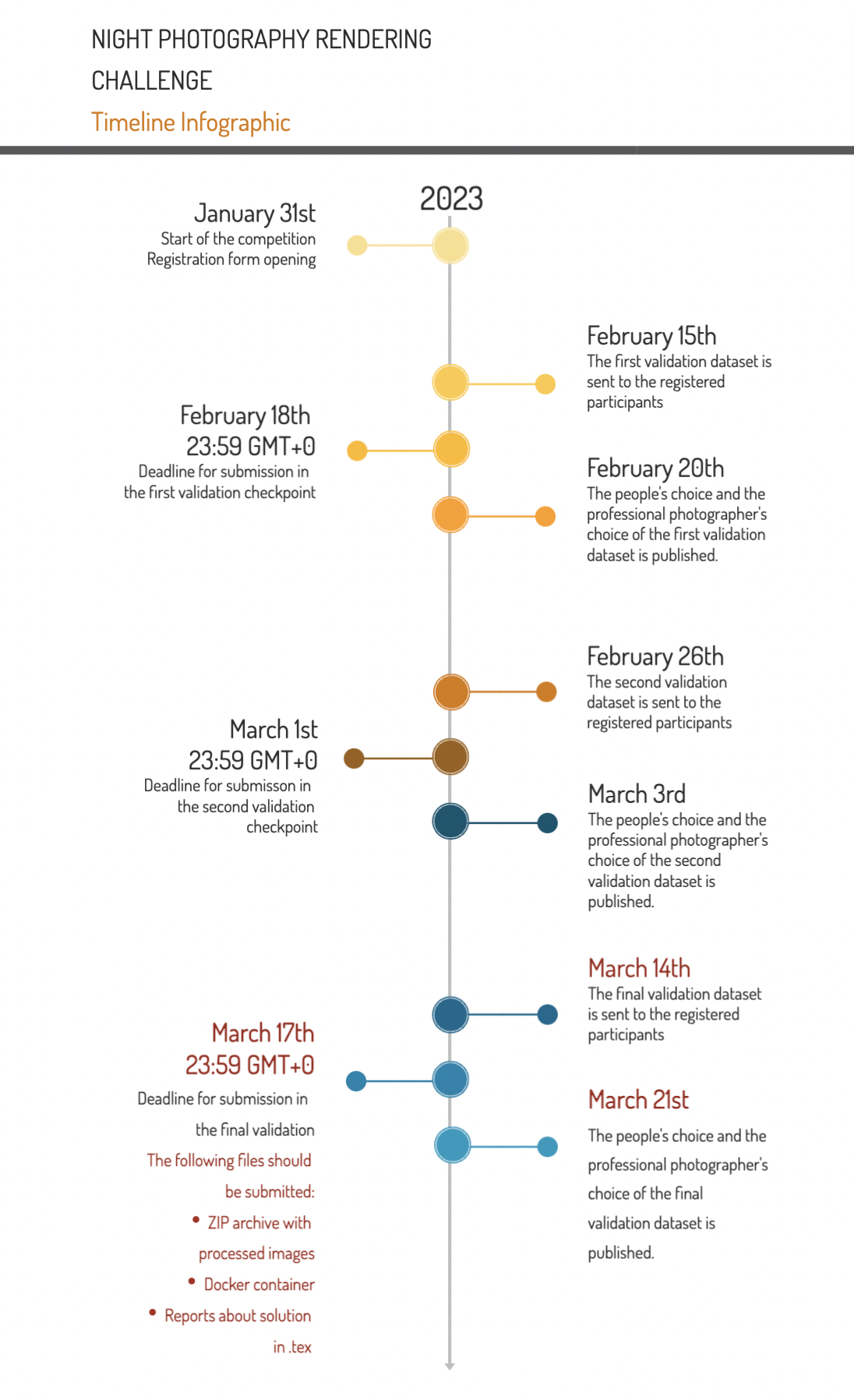

Timeline

* Please note that the timeline may change slightly, so check the challenge web page regularly.

Registered Teams

The list of 30+ registered teams is available here.

Reporting

To be eligible for prizes, participants must submit their code and a report describing their solution, in the form of a short paper, together with their submission. Participants who would otherwise win a prize but do not submit this report will be passed over.

Prizes

Winners will receive a winner certificate and the opportunity to submit their paper to NTIRE 2023 and to participate in the joint challenge report, which will also be submitted to the CVPR workshop.

The photographer

RICHARD COLLINS is the commissioner for photography titles at Ilex Press, an imprint of Octopus Books and part of the Hachette publishing group.

Richard graduated from Rochester University in 1996 with a bachelor’s degree in photography and worked as a freelance photographer in the fields of fashion and portraiture in London and New York.

After switching careers to journalism, he spent some time as the deputy editor of Practical Photography magazine, writing and researching articles on all aspects of photography and image-making.

As a commissioner at Ilex Press, Richard works with a diverse range of authors and photographers, creating both practical ‘how-to’ books and art reference manuals.

Richard has extensive experience in critical analysis of photographs and is a regular judge on the panel for the prestigious Landscape Photographer of the Year competition.

Q&A

If you have any further questions, please send an email to nightphotochallenge@gmail.com

Organizers

- Egor Ershov

- Georgy Perevozchikov

- Alina Shutova

- Ivan Ermakov

- Maria Efimova

- Arseniy Terekhin

- Nikola Banić

- Radu Timofte

Sponsorship